Today’s increasingly volatile business environment forces organizations to rethink their approach to forecasting and demand management. From a technical point of view, virtually anything is possible. But predictive algorithms and advanced data processing technologies are just one part of the story when it comes to next-generation demand management.

In the first article of this series, we discussed the uncertain, complex and volatile nature of today’s business environment and why it calls for a new value chain model. Now we take a closer look at this new model and its elements, starting with next-generation demand management.

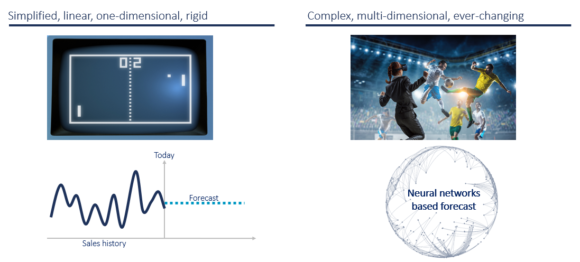

The continuous evolution of machine learning applications in our private and business lives is mostly happening quietly, but at staggering speed. Image and speech recognition, service agents and medical diagnostics are just a few of the areas being transformed by artificial intelligence. But nowhere is this as tangible as in the world of games and competition. What slowly started with IBM’s Deep Blue beating chess champion Garry Kasparov (1997) and Google’s AlphaGo beating Chinese Go champion Ke Jie (2017) is now accelerating and expanding: Google DeepMind’s AlphaZero engine beat the world-leading chess program Stockfish after just four hours of training (2017). By January 2019, DeepMind had trained its AlphaStar engine to beat top professional players in the highly complex real-time strategy game StarCraft II.

Fig.1: Changing environment and evolution of forecasting approaches

Now let us step back and consider what this means for how we run forecasting and demand management. The environment has become fundamentally more complex, multi-dimensional and disruptive; yet forecasting in most companies still relies mainly on one-dimensional statistical forecasting based on sell-in data, plus manual input from planners. In other words, we are mainly using technology and algorithms established in the 1960s to “machine forecast” a highly complex, dynamic, global marketplace. Beyond that, we place high hopes on human planners to capture the remaining complexity and volatility of the environment based on their judgement and experience, largely without any advanced decision support tools.
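To make “one-dimensional” concrete: classical statistical methods such as simple exponential smoothing forecast each item purely from its own past values. The sketch below is a minimal illustration, assuming monthly sell-in quantities for a single product and a hand-picked smoothing factor `alpha`; the function name and data are hypothetical and do not describe any particular planning system.

```python
from typing import Sequence


def simple_exponential_smoothing(history: Sequence[float], alpha: float = 0.3) -> float:
    """One-step-ahead forecast computed from the series' own past values only.

    Level update: level_t = alpha * y_t + (1 - alpha) * level_(t-1).
    Nothing but the item's own history enters the calculation: no prices,
    promotions or point-of-sale signals. That is what makes it "one-dimensional".
    """
    if not history:
        raise ValueError("history must contain at least one observation")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = float(history[0])  # initialise the level with the first observation
    for y in history[1:]:
        level = alpha * y + (1 - alpha) * level
    return level  # the same flat value is used for all future periods


# Hypothetical monthly sell-in quantities for one product; alpha is hand-picked.
sell_in = [100, 120, 90, 110, 130, 95]
print(round(simple_exponential_smoothing(sell_in), 2))  # → 106.68
```

Any external driver, such as a price change or a competitor promotion, only shows up here after it has already moved the sell-in numbers, which is why such methods react rather than anticipate.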

Rethinking forecasting and demand management

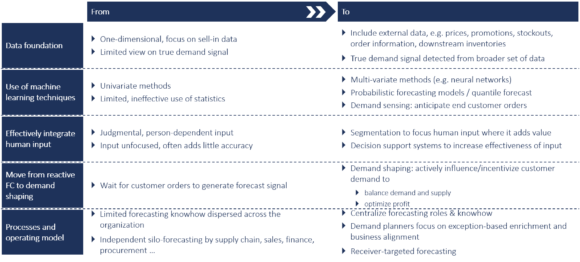

We at CAMELOT know from our own projects and research that companies can achieve step-change improvements by transitioning to a next-generation demand planning approach. As Fig. 2 illustrates, the improvements are multi-faceted and include the following areas:

- Expanding the data foundation of forecasting with a data lake including internal and external data

- Overcoming the limitations of simple statistical methods by using machine-learning techniques such as neural networks and probabilistic forecast models

- Effectively integrating human input with decision support systems

- Moving from reactive forecasting to demand shaping and profit optimization

- Upgrading and professionalizing forecasting processes and the operating model

Fig.2: Evolution of forecasting approach and solutions

Achieving the above can help companies not only boost their forecast accuracy, but also capture sales opportunities and optimize their profit margin. Let us look at some examples:

A major automotive supplier completely transformed its forecasting model by following a segmentation and exception-based planning approach enabled by machine-learning techniques. The result was a reduction in planning effort of more than 50%, allowing planners to focus on critical, value-adding areas, while forecast accuracy increased and stabilized at a significantly higher level.
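The supplier’s actual models are not described here. As a rough illustration of how segmentation plus exception-based planning saves effort, the sketch below uses simple rules in place of machine learning: items are segmented by demand variability (an XYZ-style classification based on the coefficient of variation, which is our assumption), and only items whose latest forecast error exceeds their segment’s tolerance are routed to a planner. All cut-offs, tolerances and SKUs are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Item:
    sku: str
    history: list[float]  # past demand, oldest first
    forecast: float       # forecast for the latest period
    actual: float         # actual demand in the latest period


# Hypothetical rules standing in for a machine-learning model:
# coefficient-of-variation cut-offs per segment (above 0.6 means "Z") ...
CV_CUTOFFS = [(0.25, "X"), (0.6, "Y")]
# ... and the relative forecast error each segment tolerates before a planner is alerted.
ERROR_TOLERANCE = {"X": 0.10, "Y": 0.20, "Z": 0.35}


def segment(history: list[float]) -> str:
    """Assign a variability segment from the coefficient of variation (std / mean)."""
    avg = mean(history)
    cv = pstdev(history) / avg if avg > 0 else float("inf")
    for cutoff, label in CV_CUTOFFS:
        if cv <= cutoff:
            return label
    return "Z"


def exceptions(items: list[Item]) -> list[tuple[str, str, float]]:
    """Return (sku, segment, relative error) for items outside their segment's tolerance."""
    flagged = []
    for item in items:
        seg = segment(item.history)
        error = abs(item.forecast - item.actual) / max(item.actual, 1e-9)
        if error > ERROR_TOLERANCE[seg]:
            flagged.append((item.sku, seg, round(error, 3)))
    return flagged


# Hypothetical portfolio of three items.
portfolio = [
    Item("A-100", [100, 102, 98, 101], forecast=100, actual=115),  # stable demand, 13% off
    Item("B-200", [20, 60, 5, 80], forecast=40, actual=30),        # erratic demand, 33% off
    Item("C-300", [50, 52, 49, 51], forecast=50, actual=52),       # stable demand, ~4% off
]
print(exceptions(portfolio))  # → [('A-100', 'X', 0.13)]
```

Only one of the three items lands on the planner’s desk: the stable item that missed by 13%, whereas the erratic item’s larger miss stays within its wider tolerance. In a machine-learning setup, the segments and tolerances could themselves be learned from data rather than set by hand.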

A major consumer goods player implemented demand sensing, capturing end-customer demand from the wholesaler’s point-of-sale quantities and prices and combining it with price-sensitive forecasting techniques. The changes not only increased forecast accuracy by more than 20 percentage points, but also strengthened the company’s ability to run intelligent analytics on promotions and pricing, helping it make better-informed marketing decisions. In addition, the company implemented a two-step forecasting approach that uses quantile forecasting for seasonal products to better predict the seasonal ramp-up and phase-out, resulting in better forecasts of raw-material needs and capacity usage during the pre-production phase.
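Quantile forecasting predicts several points of the demand distribution (for example the 10th, 50th and 90th percentiles) instead of a single number. The company’s actual model is not described here; the sketch below shows one simple way to produce quantile forecasts, assuming a point forecast plus a history of past errors (actual minus forecast), together with the pinball (quantile) loss commonly used to evaluate such forecasts. Function names and data are hypothetical.

```python
from __future__ import annotations

from typing import Sequence


def empirical_quantile(values: Sequence[float], q: float) -> float:
    """Linearly interpolated empirical quantile (q between 0 and 1)."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0.0 <= q <= 1.0:
        raise ValueError("q must be between 0 and 1")
    ordered = sorted(values)
    pos = q * (len(ordered) - 1)
    lower = int(pos)  # index at or below the target position
    upper = min(lower + 1, len(ordered) - 1)
    frac = pos - lower
    return ordered[lower] + frac * (ordered[upper] - ordered[lower])


def quantile_forecast(point_forecast: float, residuals: Sequence[float],
                      levels: Sequence[float] = (0.1, 0.5, 0.9)) -> dict[float, float]:
    """Turn a point forecast into quantile forecasts.

    Residuals are past errors defined as actual - forecast, so adding their
    q-quantile to the point forecast estimates the q-quantile of demand.
    Negative results are clipped to zero because demand cannot be negative.
    """
    return {q: max(0.0, point_forecast + empirical_quantile(residuals, q)) for q in levels}


def pinball_loss(actual: float, predicted: float, q: float) -> float:
    """Quantile (pinball) loss: under-forecasts cost q per unit, over-forecasts cost 1 - q."""
    diff = actual - predicted
    return max(q * diff, (q - 1) * diff)


# Hypothetical example: point forecast for a seasonal week plus ten past errors.
past_errors = [-12, -5, -3, 0, 2, 4, 6, 9, 15, 20]
bands = quantile_forecast(200.0, past_errors)
for level, value in bands.items():
    print(f"P{round(level * 100)}: {value:.1f}")
```

A planner could, for instance, size raw-material purchases for a seasonal ramp-up against an upper quantile rather than the median; which quantile is appropriate is a business decision about the cost of running short versus holding excess.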

How to get there

Predictive algorithms and data capture and processing technologies have advanced so far that almost everything is technically possible. What holds many companies back from making major steps forward are challenges in organizational setup, capabilities, and data access and infrastructure. Below we describe the major areas where companies need to rethink their approach to forecasting and demand management.

- Courage to leapfrog: Companies often prefer an incremental approach, for example first introducing statistical forecasting before moving to advanced approaches. The risk is that they keep working on the basics indefinitely and fall further and further behind their peers. We have seen in many projects that it is possible to leapfrog old technology (e.g., exponential smoothing) and move straight to machine-learning-based forecasting techniques. Piloting new technologies, at least in selected areas, will not only boost capabilities but also create a culture of curiosity, learning and innovation that inspires the team.

- Openness to learning: Companies should approach next-generation demand management as a journey of continuous learning and gradual adaptation rather than as a big-bang go-live. New forecasting techniques can run in parallel with existing processes, offering ample opportunity to understand deviations and differing signals and to gradually find their best configuration. While changes to operational planning of course need to be handled with care, there is no reason not to start learning about new technologies and insights in a non-productive environment.

- New team & capabilities: Companies need to move to a demand management team with a mix of capabilities that covers the full cycle from data to decision. The demand planner serves as a business partner and integrates data and business input into an aligned forecast for supply chain, commercial and finance. The data scientist is responsible for effectively developing, using and optimizing algorithms and decision support systems. The data manager is responsible for the quality and continuous development of the data lake. While all three roles should work together as a team, they should belong to different functional homes and communities: the data scientist and data manager should be part of a Demand Management Center of Excellence, while the demand planner should be part of the business, e.g. the country or regional commercial organization.

- Integrated planning & optimization: Next-generation demand management is not only about forecasting, but about the integrated optimization of the demand and supply side. Thus, the further the forecast recipients have moved toward integrated planning, the more they will benefit from the new approaches. Without integrated planning, the risk is generating signals and insights that cannot be translated smoothly and quickly into decisions and business value. The companies we have seen benefit most from next-generation demand management have a strong integrated business planning process in place (linking the supply chain, commercial and financial plans) and take an end-to-end approach to supply chain planning. Full end-to-end accountability for decisions and optimization will boost the value of new demand planning approaches.

- Executing on the strategic data roadmap: Setting up the right data infrastructure and governance is a major prerequisite for upping the game in forecasting and capturing the value of data. First, it is important to establish the right data architecture as a foundation, including the ability to integrate online and offline channels and a broad range of data sources (structured, semi-structured and unstructured). Second, the data lake needs to be seamlessly integrated with a data science platform. And third, state-of-the-art methods need to be applied to keep data continuously maintained and accurate, for example machine-learning-based monitoring of data quality (including anomaly detection), data enrichment and self-cleaning.
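Data-quality monitoring with anomaly detection can start very simply. As a minimal, non-machine-learning stand-in for the monitoring described above, the sketch below flags anomalous values in an incoming data feed using the modified z-score (median and median absolute deviation, MAD) with the commonly cited threshold of 3.5. The feed, the function name and the numbers are hypothetical.

```python
from __future__ import annotations

from statistics import median
from typing import Sequence


def robust_anomalies(values: Sequence[float], threshold: float = 3.5) -> list[int]:
    """Return the indices of values whose modified z-score exceeds the threshold.

    Simple statistical stand-in for ML-based monitoring (an assumption).
    Modified z-score (Iglewicz and Hoaglin): 0.6745 * (x - median) / MAD,
    where MAD is the median absolute deviation from the median. Median and
    MAD are robust: a few extreme values barely shift them.
    """
    if not values:
        return []
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # At least half the values equal the median: flag any deviation from it.
        return [i for i, v in enumerate(values) if v != med]
    return [i for i, v in enumerate(values)
            if abs(0.6745 * (v - med) / mad) > threshold]


# Hypothetical daily record counts arriving from a point-of-sale feed:
# day 6 looks like a truncated load, day 9 like a duplicated one.
daily_rows = [1020, 998, 1005, 1012, 987, 1003, 15, 1009, 994, 4980]
print(robust_anomalies(daily_rows))  # → [6, 9]
```

Median and MAD are used instead of mean and standard deviation because a single bad load would otherwise inflate the standard deviation and partly mask itself. A production setup would typically run such checks per source and per field, and could replace the rule with a learned model.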

Companies need to move to next-generation demand management in order to anticipate changes in a volatile environment more effectively. Is your company already applying elements of next-generation demand management? What works well, and what are you struggling with? Let us know your feedback and thoughts …